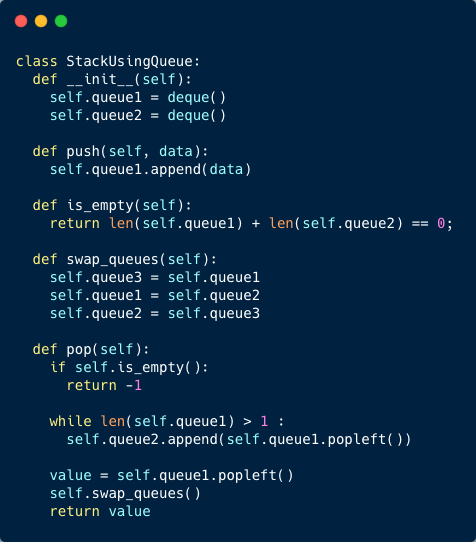

Stack Using Queues

Implement a stack using Queues.

Imagine we have a queue class that provides all common operations such as enqueue, dequeue, and size. Using the instances of this queue class, implement a stack class with its push, pop, and isEmpty operations.

push(): Constant Runtime, O(1).

pop(): Linear Runtime, O(n).

Linear, O(n) Memory Complexity

A stack is a data structure in which objects are inserted and removed according to the LIFO(Last In First Out) principle. An item is added at the top of the stack and removed from the top as well. push means to insert an item at the top of the stack and pop means to remove an item from the top of the stack.

A queue is a data structure in which objects are inserted and removed according to the FIFO(First In First Out) principle. An item is added at the back of the queue and removed from the front. enqueue means to insert an item at the back of the queue and dequeue means to remove an item from the front of the queue.

The first solution will make the ‘push’ operation faster. A good question to ask the interviewer is: what operation needs to be faster in the stack implementation? We will maintain two queues named queue1 and queue2. Let’s see what our push and pop operations look like:

- push():

- Always enqueue on queue1

- pop():

- If stack size is 0, throw exception.

- If queue1 has element(s), dequeue all elements from queue1 and enqueue on queue2 except the last element.

- The last element found above will be returned but before that swap queue1 and queue2 references.

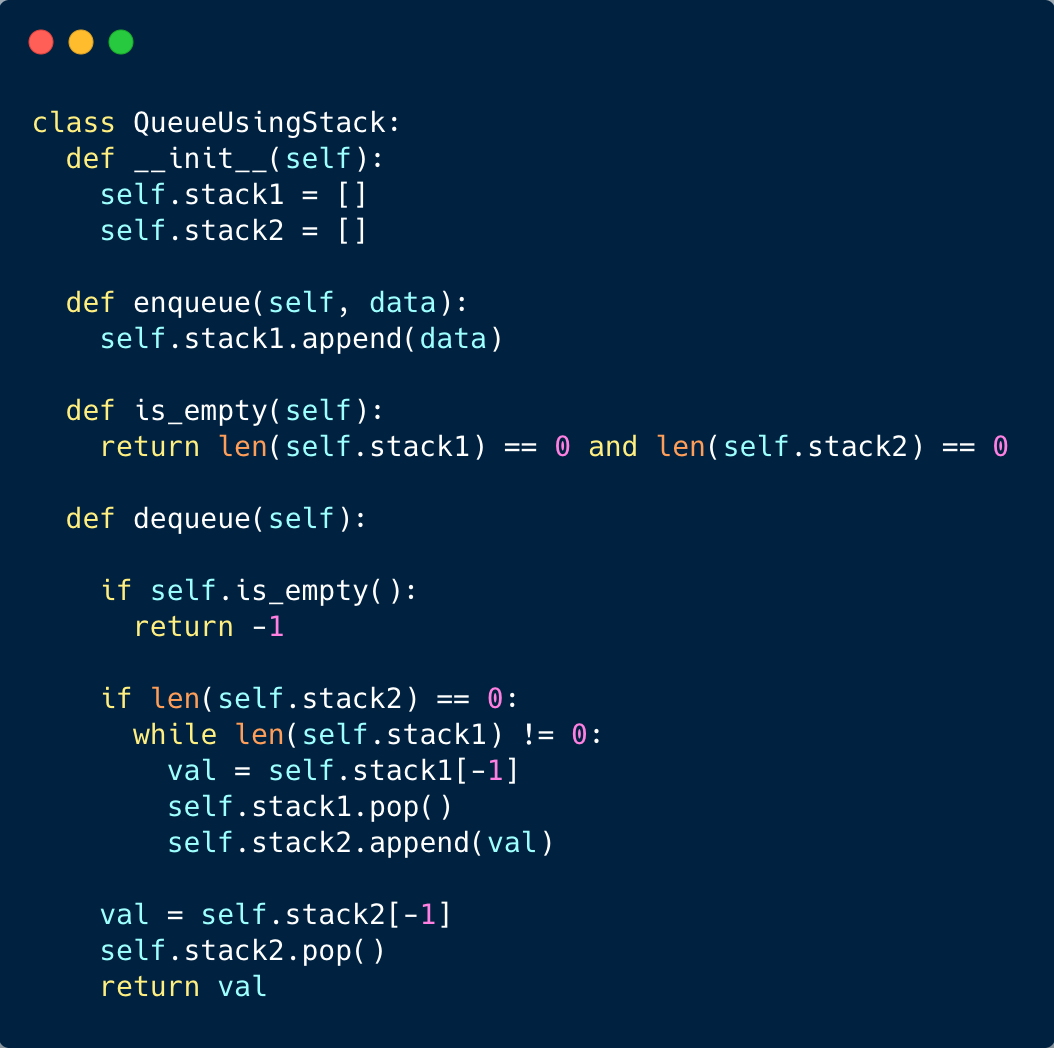

Queue Using Stacks

Implement a queue using Stacks.

Imagine we have a stack class that provides all common operations like push, pop, isEmpty. Using the instances of this stack class, implement a queue class with its enqueue, dequeue, and isEmpty operations.

enqueue(): constant, O(1) runtime complexity.

dequeue(): linear, O(n) runtime complexity.

Linear, O(n) memory complexity.

A queue is a data structure in which objects are inserted and removed according to the FIFO(First In First Out) principle. An item is added at the back of the queue and removed from the front. enqueue means to insert an item at the back of the queue and dequeue means to remove an item from the front of the queue.

A stack is a data structure in which objects are inserted and removed according to the LIFO(Last In First Out) principle. An item is added at the top of the stack and removed from the top as well. push means to insert an item at the top of the stack and pop means to remove an item from the top of the stack.

The first solution will make the ‘enqueue’ operation faster. A good question to ask the interviewer is: what operation needs to be faster on the queue implementation? We will maintain two stacks named stack1 and stack2. Let’s see what our enqueue and dequeue operations look like:

- enqueue():

- Always push on stack1

- dequeue():

- If queue size is 0, throw exception.

- If stack2 has element(s), pop the topmost and return.

- Otherwise If stack1 is non empty, pop all elements from stack1 and push them in stack2.

- At the end pop stack2’s top most element and return.

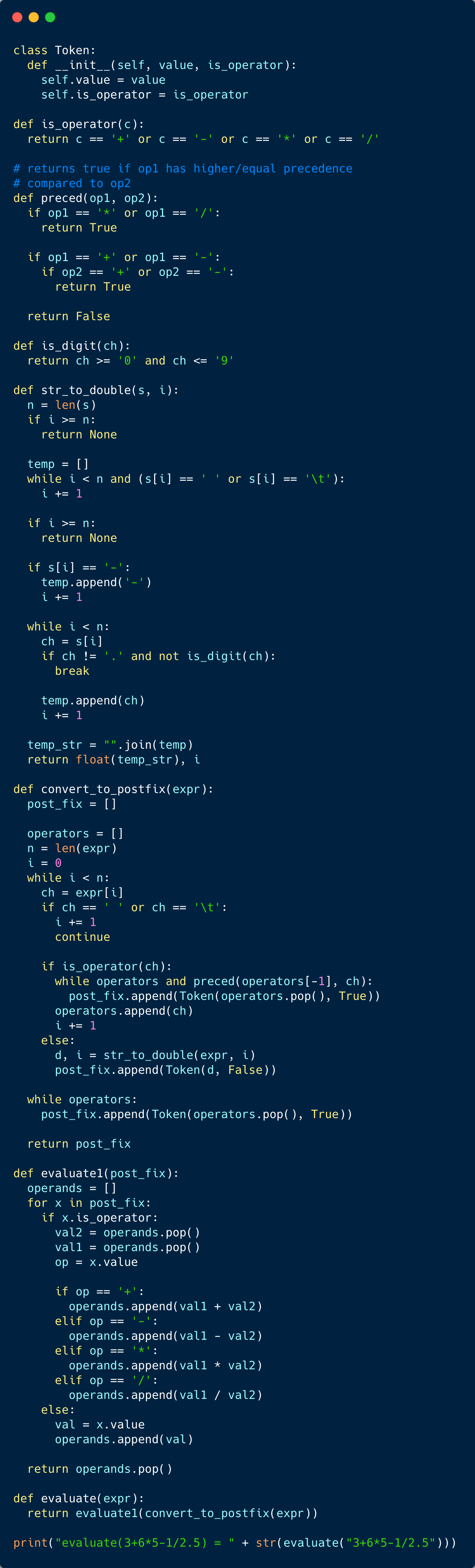

Expression Evaluation

Given an arithmetic expression, evaluate it i.e. calculate its result. Let’s assume that there are no parentheses in the expression and only binary operations ( +, -, *, / ) are allowed.

The following are a few examples of expressions and their results:

2+3=5

6 + 4 / 2 * 2 = 10

3+2.45/8=3.30625

Linear, O(n) Runtime Complexity

Linear, O(n) Memory Complexity

Arithmetic expressions can be written in the following three forms.

- Infix: Operators are written between the operands, i.e. A + B

- Prefix: Operators are written before the operands, i.e. + A B

- Postfix: Operators are written after the operands, i.e. A B +

Infix notation is the usual way of writing expressions and is easy to understand by humans. However, they are harder for computers to evaluate because of the additional work needed to decide precedence.

In solution 1, we’ll use the reverse polish notation to evaluate the expression. We’ll use two stacks, one for operators and one for operands. First, we’ll convert the expression into its postfix notation using an operators stack and then we’ll evaluate that postfix expression using an operands stack.

The algorithm for infix to postfix conversion is as follows:

- Initialize a new postfix expression

- Initialize a stack of operators

- Parse the string expression character by charcacter

- while not end of expression

- if the current character is an operator

- while operators stack is not empty AND the operator at the top of stack has higher or equal precedence

- pop the operator at the top and add it to the postfix expression

- push the operator onto the stack

- while operators stack is not empty AND the operator at the top of stack has higher or equal precedence

- otherwise if the current character is an operand

- add it to the postfix expression

- if the current character is an operator

- while stack is not empty

- pop the operator from the top and add it to the postfix expression

The algorithm to evaluate a postfix expression is as follows.

- Initialize a stack of operands

- Parse the postfix expression token by token

- while not end of postfix expression

- if the token is an operator

- pop two operands from the stack

- apply the operator on the operands

- push the result onto the operands stack

- otherwise if the token is an operand

- push it onto the operands stack

- if the token is an operator

- result is the topmost element of the operands stack

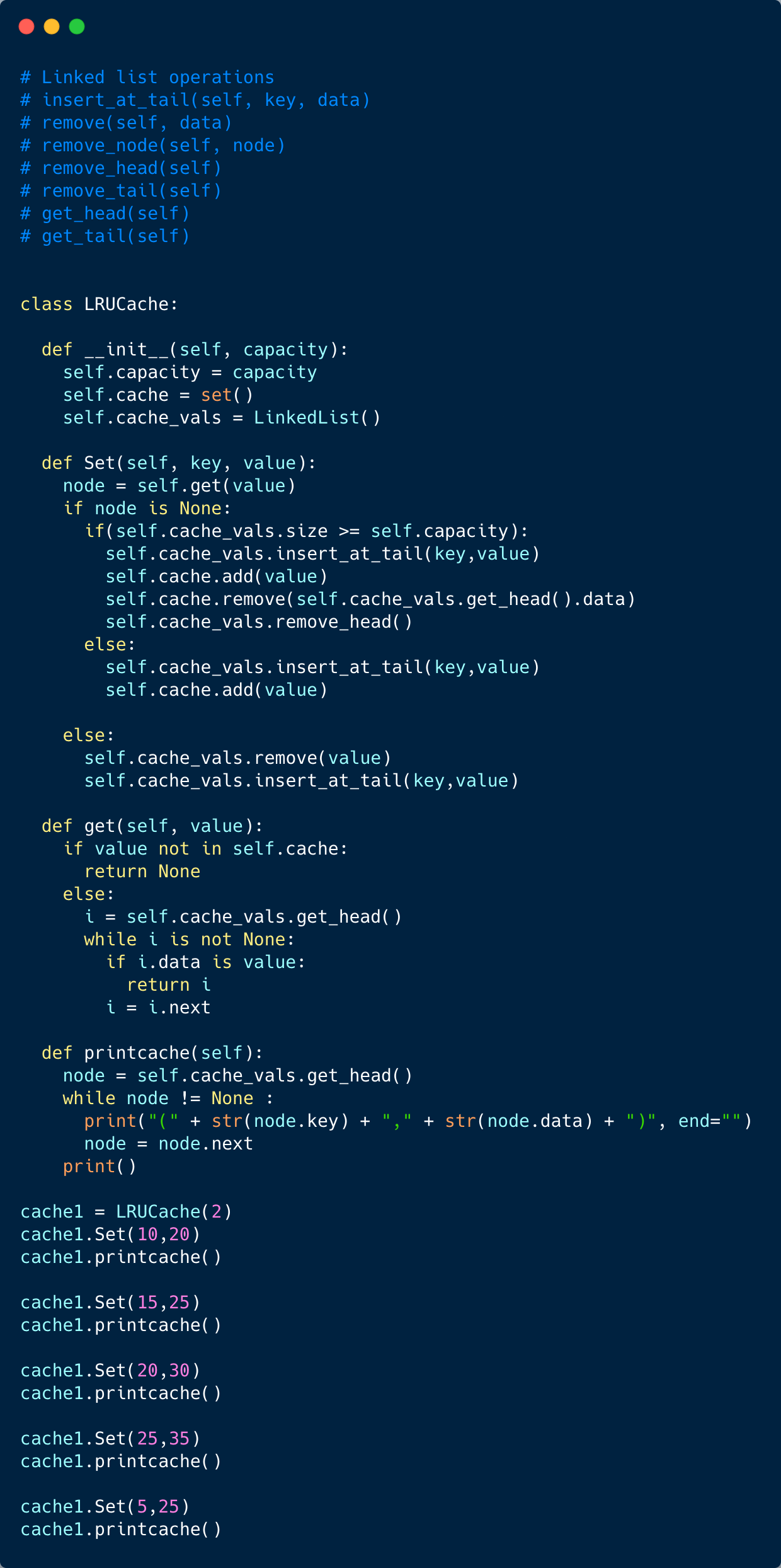

Implement LRU Cache

Least Recently Used (LRU) is a common caching strategy. It defines the policy to evict elements from the cache to make room for new elements when the cache is full, meaning it discards the least recently used items first.

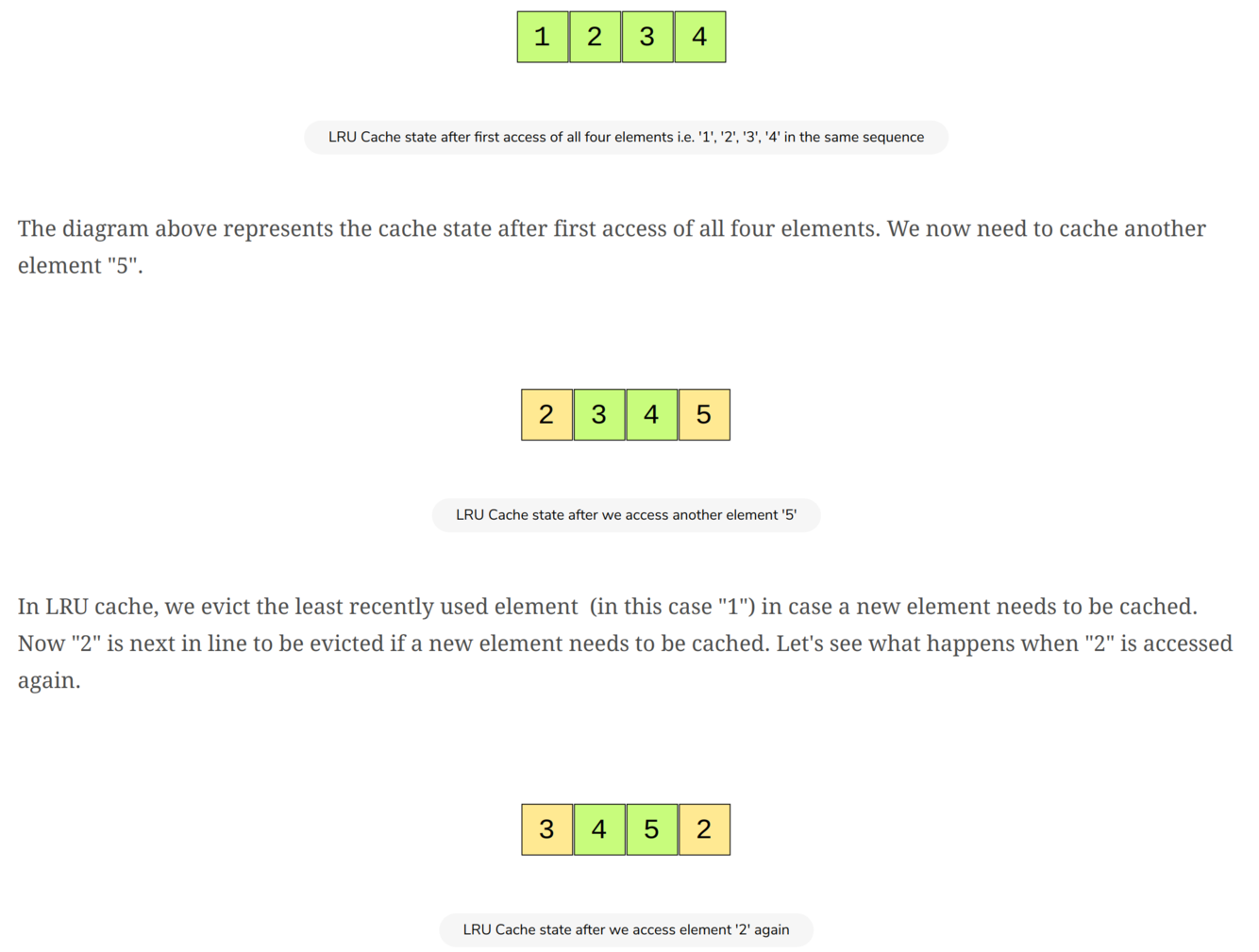

Let’s take an example of a cache that has a capacity of 4 elements. We cache elements 1, 2, 3 and 4.

Runtime Complexity

get (hashset): O(1) in the average case, O(n) in the worst case

set (hashset): Constant, O(1)

deletion at head when adding a new element (linked list): Constant, O(1)

search for deleting and adding to tail (linked list): O(n)

Complexity: O(n)

Memory Complexity

Linear, O(n) where n is the size of cache.

Caching is a technique to store data in a faster storage (usually RAM) to serve future requests faster. Below are some common examples where cache is used:

A processor cache is used to read data faster from main memory (RAM).

Cache in RAM can be used to store part of disk data in RAM and serve future requests faster.

Network responses can be cached in RAM to avoid too many network calls.

However, cache store is usually not big enough to store the full data set. So we need to evict data from the cache whenever it becomes full. There are a number of caching algorithms to implement a cache eviction policy. LRU is very simple and a commonly used algorithm. The core concept of the LRU algorithm is to evict the oldest data from the cache to accommodate more data.

To implement an LRU cache we use two data structures: a hashmap and a doubly linked list. A doubly linked list helps in maintaining the eviction order and a hashmap helps with O(1) lookup of cached keys. Here goes the algorithm for LRU cache.

- If the element exists in hashmap

- move the accessed element to the tail of the linked list

- Otherwise,

- if eviction is needed i.e. cache is already full

- Remove the head element from doubly linked list and delete its hashmap entry

- Add the new element at the tail of linked list and in hashmap

- if eviction is needed i.e. cache is already full

- Get from Cache and Return

Note that the doubly linked list is used to keep track of the most recently accessed elements. The element at the tail of the doubly linked list is the most recently accessed element. All newly inserted elements (in put) go the tail of the list. Similarly, any element accessed (in get operation) goes to the tail of the list.